We offer solutions

Data creates value and reduces costs: it can predict energy requirements in industrial plants or adapt the behavior of IT applications to measured user experience.

How we achieve our goal

We work closely with the data from the very start. Around 80 percent of the effort behind a data product goes into extracting, preparing, merging, and transforming the data, so we rely on data pipelines to automate this work to an appropriate degree.

Our key

We provide, analyze, and visualize data. With data analytics, we understand chains of cause and effect. With data science, we recognize patterns and make predictions. With MLOps, we integrate data science workflows seamlessly and automatically into your corporate IT.

Build data products correctly with us

We overcome hurdles

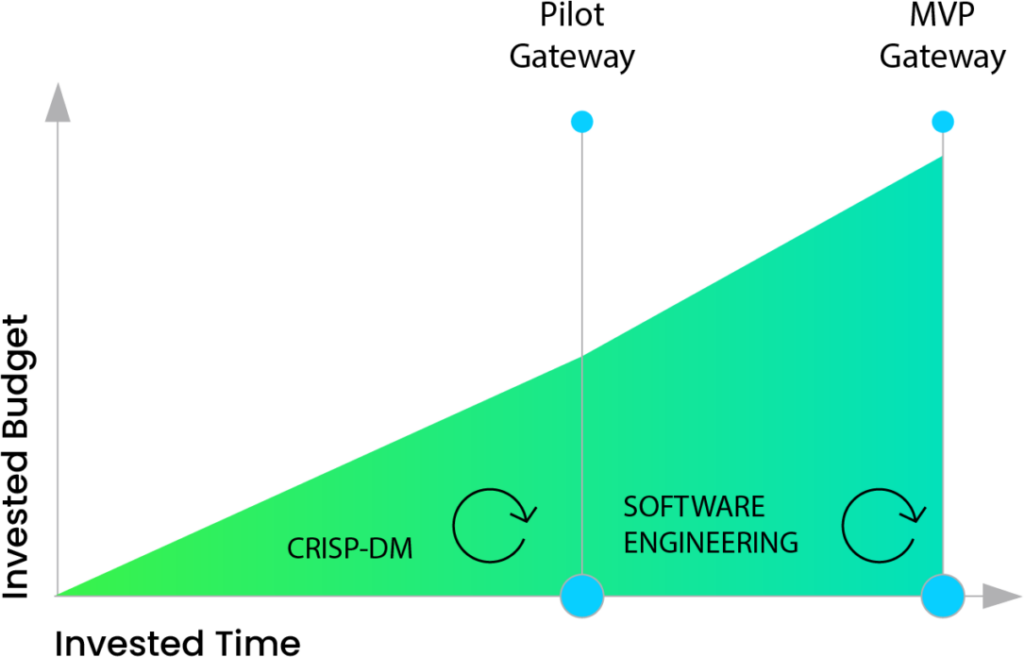

Two major hurdles stand in the way of a successful data product: the pilot trap and the engineering hurdle. Pilot phases often run too long and get stuck at the conceptual stage. Then, when the MVP is supposed to go live, the team finds itself in a complex software engineering environment that slows progress. We therefore proceed in two stages:

Pilot Gateway

In methodically sound iterations based on CRISP-DM (Cross-Industry Standard Process for Data Mining), we evaluate the feasibility of a data product thoroughly and as quickly as possible. From the outset, we manage expectations about the project's benefits and give you the basis you need for a go/no-go decision.

MVP Gateway

After a successful pilot, we plan and manage the development and deployment of the MVP primarily as a data-driven software engineering project. Here, we draw on many years of experience as a proven DevOps partner for custom systems, both small and large.

DATA ENGINEERING

Integrate and transform data

It all starts with the right data

About 80 percent of the work involved in building a viable data product lies in extracting, preparing, merging, and transforming the data. Rather than repeating this work manually every time, you should aim for an appropriate level of automation with robust data pipelines.

Data extraction, transformation, and loading

Classic ETL (extract, transform, load) for data warehouses or ELT (extract, load, transform) for data lakes; structured, semi-structured, unstructured, or streaming data; processed in batches or in real time.
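To make the ETL pattern named above concrete, here is a minimal sketch using only the Python standard library. The CSV columns (`vehicle_id`, `market`, `km`), the table name `mileage`, and the in-memory SQLite target are hypothetical assumptions chosen for illustration; in the ELT variant, the raw rows would be loaded first and transformed inside the target system.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a source system.
RAW_CSV = """vehicle_id,market,km
v1,DE,120.5
v2,FR,
v3,de,80.0
"""

def extract(text: str) -> list[dict[str, str]]:
    """Extract: parse raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows: list[dict[str, str]]) -> list[tuple[str, str, float]]:
    """Transform: drop incomplete rows and normalize market codes and units."""
    clean = []
    for row in rows:
        if not row["km"]:
            continue  # skip rows with missing mileage
        clean.append((row["vehicle_id"], row["market"].upper(), float(row["km"])))
    return clean

def load(rows: list[tuple[str, str, float]], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into the hypothetical target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS mileage (vehicle_id TEXT, market TEXT, km REAL)"
    )
    conn.executemany("INSERT INTO mileage VALUES (?, ?, ?)", rows)
    conn.commit()

def run_pipeline(text: str, conn: sqlite3.Connection) -> int:
    """Run extract -> transform -> load and return the number of loaded rows."""
    rows = transform(extract(text))
    load(rows, conn)
    return len(rows)

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    print(run_pipeline(RAW_CSV, conn))  # 2 rows survive the transform step
    print(conn.execute("SELECT market, SUM(km) FROM mileage GROUP BY market").fetchall())
```

Keeping the three steps as separate functions makes each one testable on its own and lets a scheduler or orchestrator rerun the whole pipeline automatically.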

Data modeling and storage

From raw data stores, single-node databases, and big data platforms to analysis-oriented data representations in classic schemas or agile Data Vault models.

Cybersecurity, data protection, and ethics

Protection against attacks, VIVA, GDPR compliance, and the procedural and technical anchoring of data sources, data ownership, data usage, and data sovereignty in production data pipelines.

Our projects

Where we use data engineering

Global B2C data platform:

As a DevSecOps partner, we provide mobility data for 25 markets and many millions of vehicles for downstream analysis.

Enterprise-wide data warehouse:

At the interface between product development and sales, we provide central product and market data.

IoT data hub:

For an installed base of over 200,000 devices, we collect and deliver the necessary data for smart services in real time.

DATA ANALYTICS

Analyze and visualize data

We ease the burden on your users

The data relevant to an application context must be provided, analyzed, and visualized. We distinguish between reporting and exploratory analysis. The many recurring tasks are robustly automated, which lets your users concentrate on interpreting reproducible results.

Data provision

Avoid vendor lock-in with flexible, modular interfaces that provide both enterprise-wide and domain-specific master and transaction data.

Reporting

Integrate analytical tools from open-source components, cloud services, or custom developments to continuously update KPIs, reports, and dashboards.

Exploratory analysis

Deploy and configure analytical sandboxes with a high degree of freedom, so users can explore new relationships and metrics and save them in self-service.

Our projects

Where we analyze data

Experience management:

We are equipping a global platform for location-based services with functions that capture and analyze customer experiences.

Resource optimization in production:

Based on comprehensive sensor data from the shop floor, we identify optimization potential for resource utilization.

Crowdsourcing for traffic route planning:

Using our award-winning IoT-Bike platform, we analyze the utilization and quality of municipal bike path networks.

Networking of solar power systems:

We develop descriptive analysis models for the energy management of industrial-scale photovoltaic systems.

DATA SCIENCE

Recognizing patterns in data

We make robust predictions

Data analytics helps us better understand chains of cause and effect in the past and present. With data science, we recognize recurring patterns and make predictions. With the right preprocessing to smooth out the data volatility of the application domain, we keep predictions robust, reliable, and traceable. With MLOps, we integrate data science workflows seamlessly and automatically into your enterprise IT.

Hypotheses and baseline

Design thinking workshops for multi-dimensional problem analysis and the derivation of hypotheses. Joint selection and definition of suitable decision criteria.

MLOps life cycle

Selection of appropriate ML platforms and AI services. Automation of data science workflows with versioned models, data, and environments. Reproducible DevOps integration into your enterprise IT.

Quality, concept/data drift, and explainability

Objective test procedures to validate your models, scientifically grounded concepts for compensating drift, and white-box traceability of predictions.
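As an illustration of an objective drift check, the sketch below computes the Population Stability Index (PSI), one common way to compare a feature's distribution in production against its training baseline. PSI is our choice for illustration, not necessarily the procedure used in the projects described here; the bin edges, the small epsilon for empty bins, and the 0.2 alert threshold (a frequently cited rule of thumb) are assumptions.

```python
import math

def bin_fractions(values, edges):
    """Share of values in each bin [edges[i], edges[i+1]); out-of-range values are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(edges) - 1):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    return [c / total for c in counts]

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    score = 0.0
    for e, a in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) and division by zero
        score += (a - e) * math.log(a / e)
    return score

if __name__ == "__main__":
    edges = [0, 10, 20, 30, 40]  # assumed bin edges for one numeric feature
    baseline = [5, 15, 15, 25, 25, 25, 35, 35]       # training-time sample
    live_shifted = [25, 25, 35, 35, 35, 35, 35, 35]  # production sample shifted upward
    print(psi(baseline, baseline, edges))  # identical distributions give 0.0
    print(psi(baseline, live_shifted, edges) > 0.2)  # assumed alert threshold
```

Because PSI only needs binned counts, it can run as an automated step in an MLOps pipeline and trigger retraining or review when the score crosses the chosen threshold.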

Our projects

Where we use data science

Enterprise-wide MLOps platform:

We empower our clients' data scientists to handle everything from model and data management through to automated deployment.

AI-based access control:

Using biometric and telematics factors, we make secure access control a seamless part of the customer experience.

Immersive user experience:

We evaluate, build and integrate state-of-the-art components for gesture-driven human-machine interaction.

Cobot-based user interfaces:

We develop cobot-based workstations in production and secure them using automated, end-to-end test frameworks.

“One tool alone does not solve a problem. With data-driven tools, we need an end-to-end data strategy.”

Konrad Schreiber, Deputy Head of Data and AI, MaibornWolff

Get to know us

A good start

Every project starts with a first step.

Data Thinking Workshops

Together with us, you develop data products and define concrete next steps and roadmaps. Our digital designers, data scientists, and innovation facilitators (Impulsgeber:innen) work hand in hand with you. We tailor each workshop based on a preliminary discussion. Workshops last two to three days and take place in our workshop rooms, remotely, or on your premises.

Cognitive Services

We use proofs of concept to test whether individual cognitive services suit your use case: How well can your PDFs be processed automatically? How can your audio streams be transcribed for analysis? Together we define the requirements ("When is good good enough?") and test the cognitive service using representative system data. In many cases, we can run the evaluation on our own subscription, quickly and securely.

Maturity Assessment

Do you have a data strategy? We assess the maturity of your organization, processes, data, and tools. Together, we design measures for developing and operating data products efficiently: we highlight best practices, jointly assess your current maturity against them, and identify the top initiatives. The result is a data strategy tailored to your needs.

Our open positions

Want to help develop digital products?

In our teams, people with different skills work together. Find out whether you would like to contribute your knowledge to our team.
